Setting up shop for data science in 2026

Tools, portfolio, certifications and ALL-mighty AI


Last year, I wrote about how you can set up shop as a data analyst. You can read it here:

This one is on data science.

Borrowing from the first article: as with every new year, there’s a buzz about new beginnings and everything that comes with them: resolutions, fitness, color of the year, fashion trends, start-up ideas, beauty, entertainment, tech breakthroughs, AI, health, businesses, new sign-ups, and every other initiative you can think of.

The common theme across all of it is DATA.

We love what we do – using data to generate insights that help businesses save money and make an impact, and yet, not all of us have the opportunity to apply these skills.

The job market has been painfully brutal. It’s crowded and referral-driven. Not to mention, many job posts are scams.

In this article, I’ll be sharing how I try to build systems that compound, whether I get hired or not. Basically, setting up shop, buyers or not.

1. Tools. Not what you think.

To me, tools don’t really differentiate you. Systems do. What you can do with these tools is what differentiates you.

I follow some amazing MICROSOFT EXCEL WIZARDS on LinkedIn. These women are building machines with Excel formulas: insane financial models that could withstand a tornado.

I know we like to dump on Excel, but I want to believe we know its capabilities. Have you ever watched the WORLD EXCEL CHAMPIONSHIP? If you haven’t, please watch one and be prepared to have your mind blown.

What’s my point? Python, SQL, Pandas, Scikit-learn, cloud, Excel, Tableau: whatever the tool, you have to show end-to-end thinking.

Your minimum stack should take you from notebooks to scripts and modules. For Git, that means branches, PRs, and a readable commit history. Then you can add some edge tools that tell people: this is a practitioner, not a student.

E.g.

DuckDB – did you know you can use DuckDB to scale up analytics on your local machine?

dbt – I think every data person should know the basics of dbt. A little engineering is a lot of advantage.

MLflow, Weights & Biases – experiment with these to build experiment-tracking literacy.

Your portfolio should show implementation. Use those CSVs, models, and charts to build something that actually runs.
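Here’s a minimal sketch of what moving a notebook cell into a script can look like, assuming a hypothetical orders CSV with a `customer_id` column. The file name, function names, and metric are illustrative, not a prescribed layout; the point is logic that lives in importable functions, with a small command-line entry point on top.

```python
"""repeat_customers.py: a notebook cell promoted to a reusable module (hypothetical example)."""
from __future__ import annotations

import argparse
import csv
import sys
from typing import Sequence


def count_orders(path: str) -> dict[str, int]:
    """Count orders per customer_id in a CSV that has a customer_id column."""
    counts: dict[str, int] = {}
    with open(path, newline="") as f:
        for row in csv.DictReader(f):
            counts[row["customer_id"]] = counts.get(row["customer_id"], 0) + 1
    return counts


def repeat_customer_share(order_counts: dict[str, int]) -> float:
    """Return the fraction of customers with more than one order."""
    if not order_counts:
        return 0.0
    repeat = sum(1 for n in order_counts.values() if n > 1)
    return repeat / len(order_counts)


def main(argv: Sequence[str] | None = None) -> int:
    parser = argparse.ArgumentParser(description="Share of repeat customers in an orders CSV.")
    parser.add_argument("orders_csv")
    args = parser.parse_args(argv)
    share = repeat_customer_share(count_orders(args.orders_csv))
    print(f"repeat customer share: {share:.1%}")
    return 0


if __name__ == "__main__":
    sys.exit(main())
```

Run it as `python repeat_customers.py orders.csv`, or import `count_orders` from another script or notebook. Small pure functions like `repeat_customer_share` are also easy to unit test, which reads well in a PR.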

2. GitHub. Still not what you think.

I have an upcoming series on Git and GitHub, and I think you will like it.

If you work with data, GitHub is a must. Think open source: learning, contributing, and building with others.

But rebrand your GitHub. Let it be a lab instead of a “junk space” where everything is a repo.

Have:

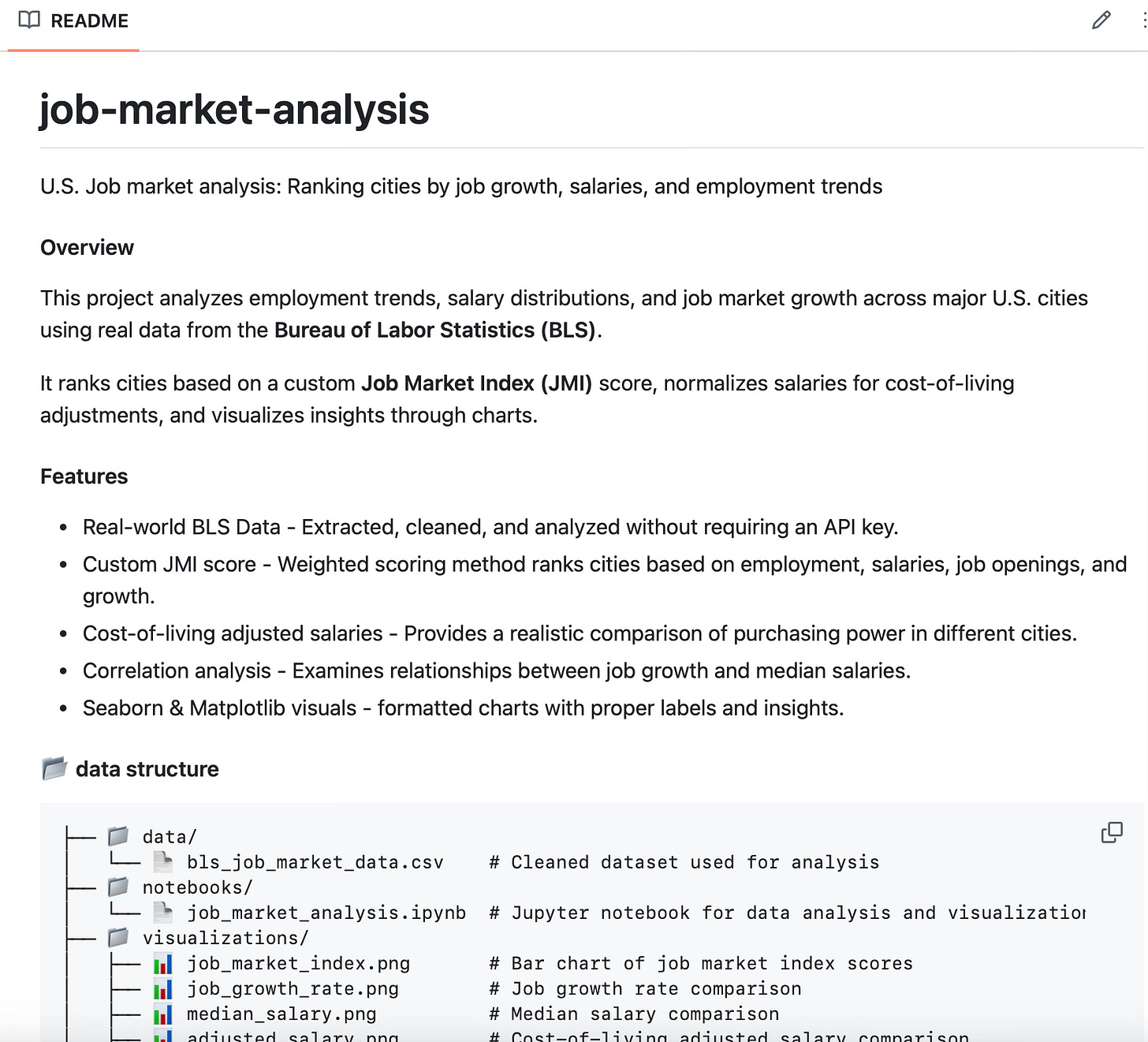

One flagship project: it can be boring, but let it be real. Something with a start, an end, and a result, just like in the real world: e.g., customer churn, a pipeline rebuild, or data ingestion, with a clear README. Like this one here:

Small but useful utilities. I have a data cleaning script I created years ago that still comes in clutch. You should have a reusable EDA template, a feature engineering helper, or even a model script. Small but functional and practical.

Evidence of iteration. Have a script for building an initial baseline or checking for leakage. As always, people will jump at the idea of not having to start from scratch, and now you’ve solved a problem for them.
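As an example of those small utilities and iteration scripts, here’s a minimal pandas sketch. All three helpers are hypothetical: a reusable EDA summary, a majority-class baseline (the accuracy any model has to beat), and a leakage check that flags numeric features whose correlation with a numeric 0/1 target is suspiciously high. The 0.95 threshold is an arbitrary starting point, and a flag is a prompt to investigate, not proof of leakage.

```python
import pandas as pd


def eda_summary(df: pd.DataFrame) -> pd.DataFrame:
    """Reusable first look: one row per column with dtype, missingness and cardinality."""
    return pd.DataFrame(
        {
            "dtype": df.dtypes.astype(str),
            "n_missing": df.isna().sum(),
            "pct_missing": df.isna().mean().round(3),
            "n_unique": df.nunique(dropna=True),
        }
    ).sort_values("pct_missing", ascending=False)


def majority_baseline_accuracy(y: pd.Series) -> float:
    """Accuracy of always predicting the most common class."""
    return float(y.value_counts(normalize=True).iloc[0])


def flag_possible_leakage(df: pd.DataFrame, target: str, threshold: float = 0.95) -> list:
    """Numeric features whose absolute correlation with the target is at least `threshold`."""
    corr = df.select_dtypes("number").corr()[target].drop(target).abs()
    return sorted(corr[corr >= threshold].index)
```

A feature like a refund flag that is only set after a customer churns will correlate almost perfectly with the target; that’s exactly the kind of column this check is meant to surface before it inflates your model’s score.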

3. Portfolios. Maybe not what you think?

You should have a portfolio of 3-5 projects (the number usually recommended), and they should be optimized for decisions and outcomes.

It sounds simple, but this is why I paused on building Tableau dashboards. As much as I was learning from them, many of them had no real value.

For 2026:

Try to build something with a distinctive value proposition. Take advantage of that one tool and create something.

Try rebuilding a model and see what worked and what didn’t.

What happened when you moved from notebook to script? Show that.

E.g.:

I started the year by creating this 2026 publishing system/tracker.

Forecasting demand with constraints like inventory, staffing, and cost

Classification with class imbalance

Time series analysis

As you build, think about how this would be useful in real life.
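For the forecasting-with-constraints idea, here’s a deliberately simple sketch: a moving-average demand forecast that is then capped by inventory, staffing capacity, and a fulfilment budget, reporting which constraint binds. All names and numbers are hypothetical, and the moving average is a placeholder for whatever forecasting model you actually use.

```python
import pandas as pd


def constrained_plan(
    weekly_units: pd.Series,
    on_hand_inventory: int,
    staff_capacity_units: int,
    fulfilment_budget: float,
    cost_per_unit: float,
    window: int = 4,
) -> dict:
    """Forecast next week's demand, then cap it by what you can stock, staff and afford."""
    # Naive forecast: mean of the last `window` weeks (placeholder for a real model)
    forecast = float(weekly_units.tail(window).mean())
    limits = {
        "demand": forecast,
        "inventory": float(on_hand_inventory),
        "staffing": float(staff_capacity_units),
        "cost": fulfilment_budget / cost_per_unit,
    }
    binding = min(limits, key=limits.get)  # the tightest limit decides the plan
    return {"forecast": forecast, "planned_units": limits[binding], "binding_constraint": binding}
```

With weekly sales of 100, 120, 110, 130, 140, 150 and a 4-week window, the forecast is 132.5 units. If inventory and budget cover 200 units but staff can only handle 125, staffing is the binding constraint: a finding a manager can act on, which is the point.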

4. Certifications

Certifications are great, but you have to back them up. Sadly, they may not get you the jobs you want, but they still show you’ve done the work. They reduce skepticism and can help in recruiting processes because they provide a baseline for a role.

Some of the ones I think are really great:

Cloud practitioner (associate level)

dbt certification if data engineering interests you

Domain-specific certifications in areas like health, finance, etc.

I’d say don’t lead with certs; show value, and use them to support what you can do.

5. All-mighty AI

Tech is an AI space now. I don’t know another way to say it, but it is crazy. So be different:

- Know when AI is wrong

- Use it to your advantage, but don’t lie to yourself

- Create better workflows and refine your prompts

- Practice ETHICAL AI, even when it’s inconvenient

You know the famous line: “you are more likely to be replaced …” LOL.

I’m sure you know it. Moving on.

6. These might still not get you the job

That’s the point of this article. With the brutal job market, you might still not get the job. I’ve been upskilling for 3 years and still don’t have a permanent role.

It’s not you, it’s them. But this works because it positions you as an authority. You can speak about data science even in your sleep.

It moves you a step out of the crowd, and tbh, motion might be the one thing that compounds in this market.

It builds leverage,

attracts other collaborators,

makes referrals easier,

and increases confidence in your skills.

Closing out with the last part from the first article:

Imagine you’ve been contacted by a small e-commerce start-up preparing for the 2026 sales season.

Before the rush, they want to identify their best-selling products, understand purchasing patterns, and forecast demand.

1. You set up automated data pipelines in AWS to pull daily sales and marketing data.

2. Next, using Python and Pandas, you clean and merge the data.

3. After that, you run a clustering analysis in R or Python to segment customers by buying behavior, grouping high-value repeat customers separately from one-time bargain hunters.

4. Finally, you use Tableau to create a dashboard that shows sales trends, top-performing product lines, and inventory-level forecasts from your predictive models.

5. Then you put it on GitHub.
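Steps 2 and 3 above could be sketched in Python roughly like this, assuming hypothetical `orders` (order_id, customer_id, amount) and `customers` (customer_id, region) tables, and that scikit-learn is installed. It cleans and merges the data, rolls it up to one row per customer, then runs k-means on standardized features. The feature choice and k=2 are illustrative; in practice you’d compare several values of k.

```python
import pandas as pd
from sklearn.cluster import KMeans
from sklearn.preprocessing import StandardScaler

FEATURES = ["n_orders", "total_spend", "avg_order_value"]


def customer_features(orders: pd.DataFrame, customers: pd.DataFrame) -> pd.DataFrame:
    """Step 2: clean and merge, then roll orders up to one row per customer."""
    clean = orders.dropna(subset=["customer_id", "amount"]).drop_duplicates("order_id")
    # validate= raises if a customer_id appears more than once in the lookup table
    merged = clean.merge(customers, on="customer_id", how="left", validate="many_to_one")
    return merged.groupby("customer_id").agg(
        region=("region", "first"),
        n_orders=("order_id", "nunique"),
        total_spend=("amount", "sum"),
        avg_order_value=("amount", "mean"),
    )


def segment_customers(features: pd.DataFrame, k: int = 2, seed: int = 0) -> pd.Series:
    """Step 3: k-means on standardized buying-behavior features."""
    # Standardize so total_spend doesn't outweigh n_orders just because of its units
    X = StandardScaler().fit_transform(features[FEATURES])
    labels = KMeans(n_clusters=k, n_init=10, random_state=seed).fit_predict(X)
    return pd.Series(labels, index=features.index, name="segment")
```

The cluster numbers themselves carry no meaning; you name the segments (e.g., “high-value repeat” vs. “one-time bargain”) by looking at each cluster’s average features.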

With this in place, when January and the new year come around, you’re ready. Your templates, scripts, dashboards, and workflows are already built.

Instead of starting from scratch, you update data sources and fine-tune parameters. The client sees value instantly: no lengthy onboarding, just immediate, actionable strategies.

That’s the value in setting up shop.

Enjoyed this? Read, comment, and subscribe for more.

Be data-informed, data-driven, but not data-obsessed

🔗 Biz and whimsy: https://linktr.ee/amyusifoh

🔗 Free design toolkit: dashboard design toolkit

Data Scientist⬩ Spreadsheet advocate ⬩ Freelancer ⬩ Turning data into useful insights
